Gemma 3, Google's new model for developers

On 12 March 2025, Google launched Gemma 3, the new generation of its open AI models for developers. Built from the same research and technology as Gemini 2.0, it reflects Google's aim to democratise access to high-performance AI. But what has changed since the first version of Gemma? Google promises better performance, stronger safety tooling and more efficient use of hardware resources. Let's take a closer look.

What has changed with Gemma 3?

With Gemma, Google aims to help developers integrate artificial intelligence into their digital solutions. But with AI advancing at breakneck speed, the tools that underpin it must also improve. That is the goal of this new version.

New features

To keep pace with advances in AI, Gemma 3 introduces several new features.

- Broad language coverage: Gemma 3 offers out-of-the-box support for over 35 languages and pretrained support for over 140, so developers can build applications for a wider, international audience.

- Integrated multimodal capabilities: beyond text, Gemma 3 can analyse images and short videos (the smallest, 1B model is text-only).

- Extended context window: with a 128,000-token context window (32,000 tokens for the 1B model), Gemma 3 can process long documents in a single pass and keep track of information across them when answering.

- Function calling: Gemma 3 supports function calling and structured output, which helps automate tasks and build dynamic, agent-like user experiences.

- Official quantized versions: Google provides quantized versions of the models, which reduce memory and compute requirements while maintaining a high level of accuracy.
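To make the function-calling feature more concrete, here is a minimal sketch of the usual prompt-based pattern: the application describes its functions in the prompt, asks the model to answer with a JSON object, then parses that reply and calls the matching Python function. The JSON reply format, the `get_weather` tool and the helper names are all hypothetical illustrations, not Google's documented format, and a hard-coded string stands in for real model output.

```python
import json

# Hypothetical tool the application exposes to the model.
def get_weather(city: str) -> str:
    # Placeholder implementation; a real app would query a weather service.
    return f"Sunny in {city}"

TOOLS = {"get_weather": get_weather}

# Hypothetical description of the tool, inserted into the prompt.
TOOL_SPEC = [
    {
        "name": "get_weather",
        "description": "Return the current weather for a city.",
        "parameters": {"city": "string"},
    }
]

def build_prompt(user_message: str) -> str:
    """Describe the available functions and ask for a JSON-only reply (assumed convention)."""
    return (
        "You can call these functions:\n"
        + json.dumps(TOOL_SPEC, indent=2)
        + "\nIf a function is needed, reply ONLY with JSON of the form "
        + '{"name": <function name>, "arguments": {<argument>: <value>}}.\n'
        + f"User: {user_message}"
    )

def dispatch(model_reply: str) -> str:
    """Parse the model's JSON reply and call the matching Python function."""
    call = json.loads(model_reply)
    func = TOOLS.get(call["name"])
    if func is None:
        raise ValueError(f"Unknown function: {call['name']}")
    return func(**call["arguments"])

# A hard-coded reply stands in for real model output here.
reply = '{"name": "get_weather", "arguments": {"city": "Paris"}}'
print(dispatch(reply))  # Sunny in Paris
```

Keeping the dispatch step behind an explicit `TOOLS` dictionary means the model can only trigger functions the developer has chosen to expose.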

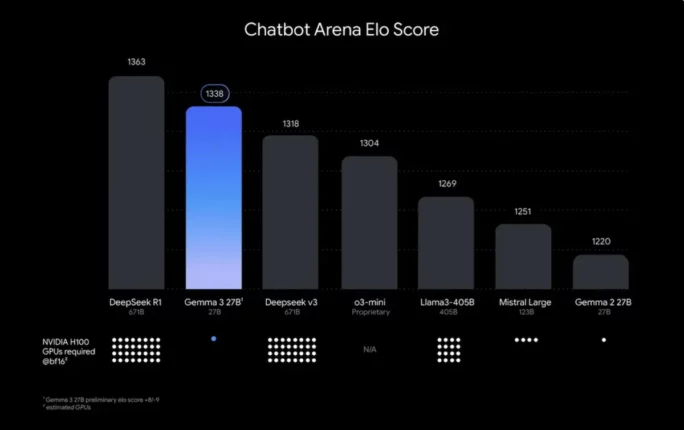

Google also reports that Gemma 3 compares favourably with much larger models: in preliminary human-preference evaluations on the LMArena leaderboard, the 27B model outperformed Llama3-405B, DeepSeek-V3 and o3-mini. It is also designed to run on a single GPU or TPU, which keeps hardware requirements modest.
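Why do quantized versions matter for running on a single GPU? A back-of-the-envelope estimate helps: the memory needed for the weights is roughly the number of parameters multiplied by the bytes stored per parameter. The sketch below assumes nominal parameter counts for the Gemma 3 sizes and a flat 20% margin for activations and cache; both are simplifications, and real usage (especially with long contexts) can be considerably higher.

```python
# Nominal parameter counts of the Gemma 3 sizes, in billions (rounded).
GEMMA3_SIZES_B = {"1B": 1, "4B": 4, "12B": 12, "27B": 27}

# Bytes needed to store one weight at each precision.
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}

def weight_memory_gb(params_billion: float, precision: str) -> float:
    """Approximate memory for the weights alone, in gigabytes (10**9 bytes)."""
    return params_billion * BYTES_PER_PARAM[precision]

def fits_on_gpu(params_billion: float, precision: str,
                gpu_memory_gb: float, overhead: float = 0.2) -> bool:
    """Rough check: weights plus an assumed 20% margin must fit in GPU memory."""
    return weight_memory_gb(params_billion, precision) * (1 + overhead) <= gpu_memory_gb

for size, params in GEMMA3_SIZES_B.items():
    row = ", ".join(f"{p}: {weight_memory_gb(params, p):.1f} GB" for p in BYTES_PER_PARAM)
    print(f"Gemma 3 {size} -> {row}")
```

For the 27B model this gives about 54 GB of weights in bf16 but about 13.5 GB at 4-bit precision, so `fits_on_gpu(27, "int4", 24)` returns `True` for a 24 GB card while `fits_on_gpu(27, "bf16", 24)` returns `False`.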

Enhanced security

Although Gemma 3 is an open model, Google has not neglected safety. Alongside it, the company released ShieldGemma 2, a 4B-parameter image safety classifier built on Gemma 3. It automatically labels images in three categories (dangerous content, sexually explicit content and violence) so that problematic content can be filtered out.

Overly strict rules could stifle innovation, however, which is why Google lets developers customise ShieldGemma 2 according to their own criteria.
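How might that customisation look in an application? The sketch below assumes the classifier has already produced a violation probability for each of the three categories (how you obtain these scores depends on your runtime and is not shown). The `SafetyPolicy` class, the category keys and the score values are hypothetical; the point is that each developer chooses their own threshold per category.

```python
from dataclasses import dataclass, field

# The three categories Google names for ShieldGemma 2 (key names are our own).
CATEGORIES = ("dangerous_content", "sexually_explicit", "violence")

@dataclass
class SafetyPolicy:
    """Hypothetical per-category thresholds chosen by the developer."""
    thresholds: dict = field(default_factory=lambda: {c: 0.5 for c in CATEGORIES})

    def flagged(self, scores: dict) -> list:
        """Return the categories whose score meets or exceeds its threshold."""
        return [c for c in CATEGORIES if scores.get(c, 0.0) >= self.thresholds[c]]

    def allow(self, scores: dict) -> bool:
        """An image is allowed only if no category is flagged."""
        return not self.flagged(scores)

# Made-up scores standing in for the classifier's output on one image.
scores = {"dangerous_content": 0.10, "sexually_explicit": 0.02, "violence": 0.62}

default = SafetyPolicy()
lenient = SafetyPolicy({"dangerous_content": 0.5, "sexually_explicit": 0.3, "violence": 0.8})

print(default.flagged(scores))  # ['violence']
print(lenient.allow(scores))    # True
```

The same image is blocked under the default policy but allowed under a more lenient violence threshold, which might suit, say, a news-archive tool better than a children's app.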

Balancing innovation and protection, Gemma 3 was developed with strict data governance and extensive safety evaluations. The result is a responsible approach to open models that could become a new standard.

How do you use Gemma 3?

Google has streamlined the adoption process so that developers of every level of expertise can use the model. So, in practical terms, how do you get started? Here are the steps:

Step 1 – Explore: Try Gemma 3 directly in your browser in Google AI Studio, or get an API key from Google AI Studio to call it from your own code.
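Once you have an API key, a call can be made with nothing more than the Python standard library. This minimal sketch targets the Gemini API's REST `generateContent` endpoint; the model ID `gemma-3-27b-it` is an assumption (check which Gemma models are listed in Google AI Studio), and the request is only built, not sent, until you call `generate` yourself.

```python
import json
import urllib.request

API_ROOT = "https://generativelanguage.googleapis.com/v1beta/models"
MODEL_ID = "gemma-3-27b-it"  # assumed model ID; check the list in Google AI Studio

def build_request(prompt: str, api_key: str, model: str = MODEL_ID) -> urllib.request.Request:
    """Prepare a generateContent request without sending it."""
    body = {"contents": [{"parts": [{"text": prompt}]}]}
    return urllib.request.Request(
        url=f"{API_ROOT}/{model}:generateContent",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "x-goog-api-key": api_key},
        method="POST",
    )

def extract_text(response_json: dict) -> str:
    """Join the text parts of the first candidate in a generateContent response."""
    parts = response_json["candidates"][0]["content"]["parts"]
    return "".join(part.get("text", "") for part in parts)

def generate(prompt: str, api_key: str, model: str = MODEL_ID) -> str:
    """Send the request over the network and return the model's text answer."""
    with urllib.request.urlopen(build_request(prompt, api_key, model)) as resp:
        return extract_text(json.load(resp))
```

Usage, assuming your key is stored in an environment variable of your choice (here the hypothetical `GEMINI_API_KEY`): `generate("Summarise Gemma 3 in one sentence.", os.environ["GEMINI_API_KEY"])`. Google's official SDKs wrap the same endpoint if you prefer not to handle HTTP yourself.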

Step 2 – Customise: Download the Gemma 3 models from Hugging Face, Ollama or Kaggle, then adapt them (for example, by fine-tuning) to suit your needs and environment.

Step 3 – Deploy: Gemma 3 integrates with deployment and scaling options such as Vertex AI and Cloud Run, and it is optimised to run on NVIDIA GPUs.

Want to use Gemma 3? Feel free to contact our SEO agency to optimise your digital tools.